Background

Predicting the next clinical event from electronic health record (EHR) sequences — identifying both the event type and the time to its occurrence — is a fundamental challenge in clinical decision support. We present a large-scale comparative evaluation on MIMIC-IV v3.1, introducing Cadence, a novel neural model grounded in the Narrative Velocity (NV) feature-engineering framework.

Methods

We evaluated seven model families on identical data splits from MIMIC-IV v3.1: Cadence (ours), XGBoost-884, FT-Transformer, Random Forest, Logistic Regression, LSTM, and RETAIN. All models predict next-event type (50-cluster vocabulary) and time-to-next-event jointly. Cadence is a 5.86M-parameter residual MLP combining 884 Narrative Velocity features with two PubMedBERT text embeddings, trained with self-knowledge distillation and MixUp augmentation in a single forward pass. No ensemble, no competitor-model distillation, no external teacher. Reporting follows TRIPOD+AI guidelines.

Findings

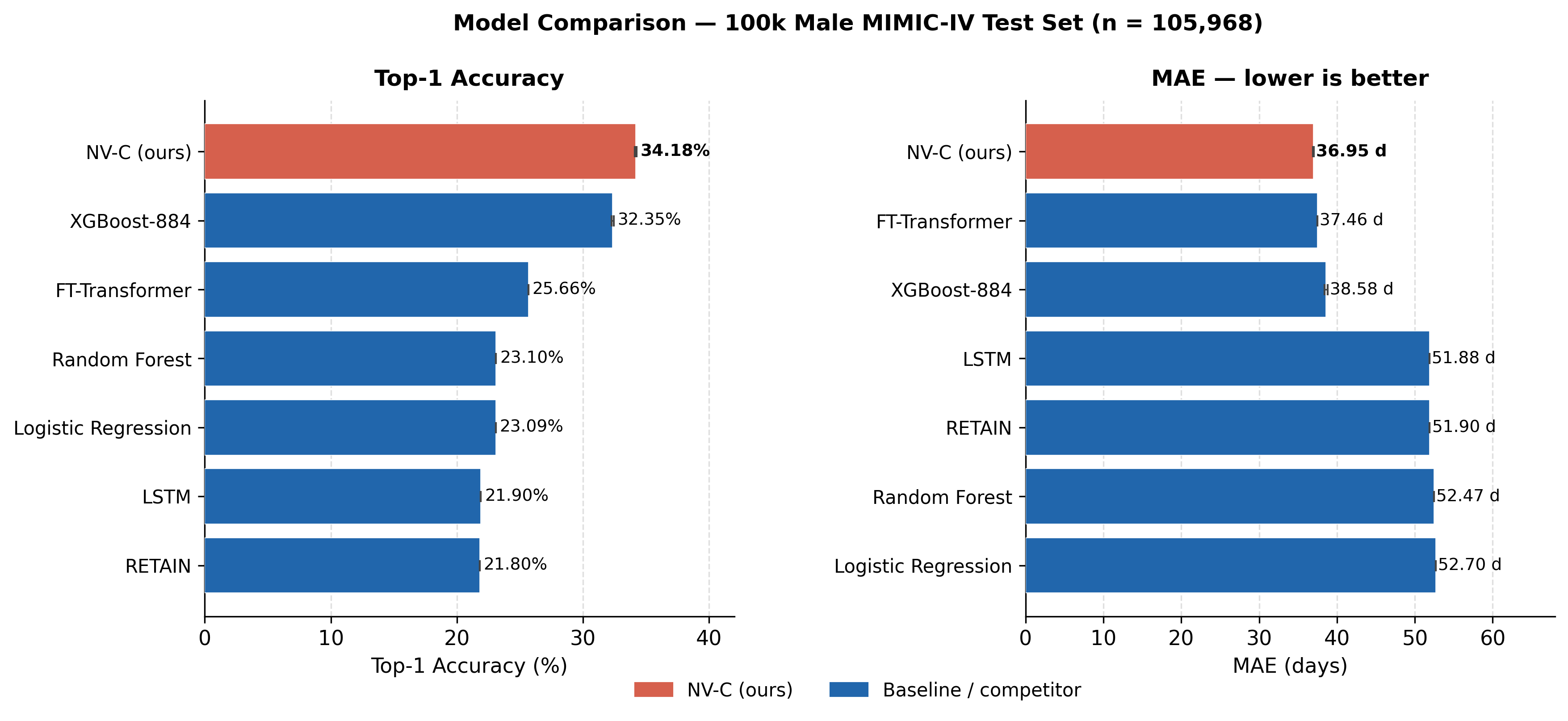

At the 100k training tier (male cohort), Cadence achieves the best top-1 accuracy (34.18%) and best MAE (36.95 d) across all seven models. At full-cohort scale (810,988 male / 999,497 female training sequences), Cadence achieves the best top-1 accuracy on both sexes (38.04% male, 35.66% female). Cross-seed standard deviations are ≤0.08 pp top-1 and ≤0.09 d MAE.

External Validation

Cadence was evaluated on 1,120 patients from Brigham and Women's Hospital (BWH). Under pathological domain shift (Jensen–Shannon divergence 0.27), Cadence retains the highest accuracy (27.58%) with the smallest degradation (−6.67 pp) among all models, suggesting the PubMedBERT backbone provides a domain-stable representation.